Monday 2026-06-08

06:00 AM

Funniest/Most Insightful Comments Of The Week At Techdirt [Techdirt]

This week, our first place winner on the insightful side is an anonymous comment offering a theory about ICE’s addiction to masks:

Let me suggest a different reason…

Let’s say that all of those J6 dumbfucks who livestreamed their crimes, and who now have received pardons for acting like trailer trash are looking to start rebuilding their pathetic lives.

Let’s also say that they feel burned by the Trump administration for letting them hang out to dry for 4 years instead of pardoning them in 2020. I’m assuming he thought of them as ‘contractors.’

I’m suggesting that ICE has a hardon for masks because that’s the reward for the J6’ers who got fucked for 4 years – ICE just let them apply, and blam! You have an ICE agency chock full of the garbage of America who have a boner for revenge, but if anyone compares them to J6 footage, they’d be fucked 6 ways to Sunday.

It’s not like security clearances make any difference to this clown car of fuckups.

Unmask those pieces of shit and let’s start comparing faces. I’ll bet once you dig a little, you’ll start to smell the white trash like the Proud Boys, OathKeepers, and all those other MAGAs with ‘small dick syndrome.’

In second place, it’s Stephen T. Stone hammering home the most important point about the Bricks and Minifigs saga:

Dear everyone involved with this story:

This could have been a few emails between lawyers.

For editor’s choice on the insightful side, we start out with a reply from dfbomb to the first place winning comment above:

This lines up with my observations that ICE’s behaviors with license plates, car stealing and general fuckery match the patterns of the Boogaloo and Proud Boys that came to Minneapolis during George Floyd to start a race war.

They even target the same neighborhoods.

Next, it’s Nathan F with a comment about the Supreme Court’s transparently racist double standard on voting rights:

And Roberts still wonders why no one likes the SCOTUS or believes they make good, reasonable, well thought out decisions?

Over on the funny side, our first place winner is j with a reaction to our piece on the LEGO dispute:

Wowsers…. There needs to be a length of story warning at the beginning.

I’m used to the normal two to five paragraphs on here. But eighteen page down presses later… just a heads up would be nice.

In second place, it’s Thad raising an eyebrow about one word in a line we wrote about Lindsey Halligan:

Lindsey Halligan — managed to set fire to pretty much everything she touched before deciding to exit the DOJ.

“deciding”

For editor’s choice on the funny side, we start out with an anonymous reply to a commenter who spotted a “Luigi” bumper sticker:

Glad that Mario’s brother is finally getting some recognition for helping to take down the evil boss in the mushroom kingdom.

Finally, it’s an anonymous comment about Greg Bovino’s not-so-subtle Nazi salute:

I think there is an innocent explanation for that gesture: Bovino was trying to make himself look taller.

That’s all for this week, folks!

04:00 AM

Take a pause, meditate [F-Droid - Free and Open Source Android App Repository]

This Week in F-Droid

TWIF curated on Friday, 05 Jun 2026, Week 23

Community News

AirPlay Server, an AirPlay receiver implementation with video and audio support, was just added. It pairs with Mirror for streaming between devices, or straight from other AirPlay-compatible devices out there.

Bold Bitcoin Wallet got a huge update to 4.0.0; you’ll just have to read about it for yourself here.

FairEmail was updated to 1.2318 and now it requires Android 6 or later.

Inner Breeze was updated to 1.5.0, its last version, because the upstream source repo was archived. But the app has a fresh start: we’ve included Inner Breeze (yes, same name, same developer, but a different appid). If you had the app installed before, better switch now to keep getting updates.

MakeACopy – OCR Latin (Best) was archived, as MakeACopy, updated to 4.3.0 this week, already covers its use case. One less app to keep installed.

While the Briar apps have been in maintenance mode for a while now, another team has built an app on their protocol. The newly included Zerion (Private messaging over Tor. No phone, no email. Now with channels.) promises a lot of features. Since we’ve added the latest version, you can read the explainer post, and maybe peek at the older posts too for more in-depth knowledge.

@shuvashish76 feeds our FOMO:

App Manager celebrates 6 years of existence in a dev post. While we don’t track you, the user, we do have some download stats (main server only) here or here or here that might answer some dev questions.

DetoxDroid: Digital Detoxing as Your New Default was updated to 2.4.0, helping you doomscroll less. No, you don’t need AI or the latest and greatest phone released yesterday. DetoxDroid can help you take pauses from the digital realm and keep you in control… of you.

Mullvad had a new security assessment of its app, with only minimal fixes needed.

Prav developers explain how the service is funded and make an appeal for donations to keep the sign-in function alive. If you believe in decentralized, open protocols for communication built on FLOSS, Prav and its upstream Quicksy are the entry points for your less tech-savvy friends, and they need your help.

Torrent Search was updated to 0.5.0 with many changes, like a torrent details screen, a better browser, view markers, better filtering, categories and more.

wallabag was updated to 2.6.0 after a two-year pause, with translation updates, fixes and more.

Archived Apps

2 more apps were archived

- AndroidCrypt: AES Crypt compatible file encryption utility (it uses the non-free aescrypt lib)

- Hermes Agent: Run local AI models with chat, files, voice, and Android tools. (Replacement coming soon!)

Newly Added Apps

36 more apps were newly added

- 2026 Football Fixtures Widget: 2026 football fixtures and widgets.

- Acho: Lightweight WebView client for Acho Chat

- Acoustic: Simple internet radio app

- Albi: A health tracking app that respects your privacy

- Android IP Camera: An MJPEG IP Camera app

- Appstract Icon Pack: Abstract icon pack with 590 hand-designed icons

- Autu Mandu: Control the income and expenses of your vehicles

- Backlog Tracker: Calculate, track, and defeat compounding academic backlogs

- C2K — Couch to 5K & 10K: C25K/C210K running trainer. No Google, no tracking.

- Collective Club Maze: A game similar to the board game ricochet robots

- Compound Calculator: Simple offline compound interest calculator

- duckAssist: Lightweight, privacy-focused WebView wrapper for Duck.ai

- Extend: Extend is an all-purpose display manager for any physical or virtual display

- Foldio: A music player that treats folders as playlists

- Forkgram Classic: A Telegram fork preserving the classic UI from before the Liquid Glass era

- FoxAppMemo: Track, rate and tag your apps with memos. No internet required.

- iBeacon Tasker Plugin: Scan iBeacon advertisements from Tasker tasks

- iSpindle Plotter: Collect and plot fermentation data from an iSpindel hydrometer

- Magnifica: A magnificent magnifier

- Mirror: Screen mirroring manager with support for AirPlay, Moonlight, and DisplayLink

- MobiloSignal: Find the best spot for calls and mobile internet by seeing signal strength

- Mood Cairns: Private, fully offline mood tracker. No network access. Your data’s yours.

- NOVA: Open-source client for Unraid® NAS servers

- Offline Currency Converter: Convert currencies offline with support for 160+ currencies

- Otoscope: Ad-free viewer for cheap WiFi otoscope cameras

- Quedalle: Quedalle in ads, tracking, accounts. Just a tile launcher.

- ShizuWall: Lightweight no root, no VPN firewall solution powered by Shizuku

- SrednaBG: Real-time average speed inside Bulgaria’s average-speed camera zones

- UWR Planer: Match planning for underwater rugby – the UWR Planer app

- Valencia Transit Reloaded: Transit info for the metropolitan area of Valencia and Alicante

- Vegan Inspector: A barcode scanner that checks if products are vegan or vegetarian

- Voki Bot: Automation tool

- Voyager: Material Design 3 file browser with SFTP, FTP, SMB, and WebDAV support

- WakeUpScreen: Wake your screen gently the moment a notification arrives

- Weather: Weather app with Material You design

- WhatsOpen: Open WhatsApp chats with any number, no contact needed

Updated Apps

276 more apps were updated

- AAAAXY was updated to 1.7.77+20260602.4036.8ed3d156

- AELF - Bible and day’s reading was updated to 2.10.0

- Agora was updated to 1.0.10

- Aisleron Shopping List was updated to 2026.4.0

- AlexCalc was updated to 1.0.16

- AliasVault was updated to 0.29.3

- Amber was updated to 6.2.0

- AndBible: Bible Study was updated to 5.1.1099

- Andor’s Trail was updated to 0.8.16.1

- AniSync was updated to 1.8.0

- AnLinux was updated to 6.73 Stable

- AnyMemo was updated to 10.12.2

- ArcaneChat was updated to 2.51.0

- Areada was updated to 1.1.2

- Aria for Misskey was updated to 1.5.3

- aTalk was updated to 6.1.0

- Autasker was updated to 1.1.0

- Aves Libre was updated to 1.14.5

- baresip was updated to 83.0.0

- baresip+ was updated to 72.0.0

- BasicSync was updated to 2.0

- Beam was updated to 1.5

- BeatBridge was updated to 1.0.32

- Binary Eye was updated to 1.73.1

- BinEd - Hex Editor was updated to 0.2.10

- Birthday Adapter was updated to 3.12.2

- BleOta was updated to 2.0.3

- Blichess was updated to 8.0.0+ble2.5.1

- BlockDrop was updated to 1.0.34

- BookWyrm was updated to 1.3.9

- Braincup was updated to 2.18.0

- Breakout 71 was updated to 29675190

- BusTO was updated to 2.6.46

- Butterfly was updated to 2.5.2

- Calendar was updated to v2.5.0

- Cantonese Keyboard - Jyutping was updated to 0.62.0

- Casio G-Shock Smart Sync was updated to 42.0

- Cfait was updated to 1.0.5

- Chance was updated to 2.3.0

- Chess was updated to v2.5.0

- Clash Meta For Android was updated to 2.11.28.Meta

- Clock was updated to 2.30.1

- Clock by vayunmathur was updated to v2.5.0

- Cloudbase Predictor was updated to 1.5.0

- CO3 was updated to B0.0.9

- Colota - GPS Location Tracker was updated to 1.10.0

- Concert Diary was updated to 1.0.2

- ConnectBot was updated to 1.10.7-oss

- ConsoleFlow was updated to 2.2.6

- Contacts was updated to v2.5.0

- Cuscon was updated to 4.1.0.0

- DAVx⁵ was updated to 4.5.13-ose

- DeepL was updated to 9.6

- Delta Icon Pack was updated to 2.16.0

- Dima Defense ⚔️ was updated to 0.6.2

- Discover Ads Filter was updated to 1.1.3

- disky - Find your biggest diskspace thieves! was updated to 2.2.0

- Dragon Launcher was updated to 3.2.2 (Worm)

- Drinkable was updated to 1.59.1

- DuckDuckGo Privacy Browser was updated to 5.281.1

- DuressKeyboard was updated to 6.4

- DuressKeyboardLite was updated to 7.0

- Element X - Secure Chat & Call was updated to 26.05.2

- EnigmaDroid was updated to 1.9.2

- Equalizer314 was updated to 0.0.10-beta

- eQuran was updated to 3.4.0-beta.4

- EUC State Of Health Analyzer was updated to 1.53

- FairScan – PDF Scanner was updated to 1.23.0

- FastTimes was updated to 1.0.4

- Fatto was updated to 0.6.8

- Fennec F-Droid was updated to 151.0.2

- Finalbenchmark 2 - CPU Test was updated to 1.0.2

- Find Family was updated to v2.5.0

- Fitness Calendar was updated to 2026.05.1

- Flare was updated to 1.5.1

- Flash Alert (Calls & SMS) was updated to 2.6.4

- Flash Deck was updated to 1.11.0

- Flexify was updated to 2.1.82

- Fluffy was updated to 4.2.1

- Flux News was updated to 2.1.0

- Fokus Launcher was updated to 1.6.4

- Forkyz was updated to 83

- FreeOTP+ was updated to 3.4

- Geekttrss was updated to 1.6.10

- GeoShare: Jump Between Maps was updated to 6.3.1

- GeoWeather was updated to 1.8.1

- GitSync was updated to 1.8.59

- Gizz Tapes was updated to KGLW

- GLPI Agent was updated to 1.8.0

- GPN 2026 Schedule was updated to 1.76.0-GPN-Edition

- Hammer was updated to 3.1.2

- Haven SSH Client was updated to 5.59.21

- Headwind MDM Agent was updated to 6.36

- HFUT-Schedule was updated to 4.20.5.2

- Hidroly: Water Reminder was updated to 2.1.0

- Holos was updated to 1.8.0

- Home Medkit was updated to 1.9.7

- Idle Fantasy was updated to 1.7.9

- Immich Uploader was updated to 2.2.2

- Infomaniak Euria was updated to 1.4.2

- Infomaniak Mail was updated to 1.29.1

- Jamu was updated to 0.1.5

- JetBird was updated to 1.6.3

- K-9 Mail was updated to 19.1

- Kai 9000 was updated to 2.7.0

- KashCal was updated to 23.7.85

- KDE Connect was updated to 1.35.8

- KeePassDX Passkey Vault was updated to 4.4.3

- KeinPlan was updated to 26.06.01

- Kompact Image Compressor was updated to 1.0.9

- Kreate was updated to 2.2.0-fdroid

- Kvaesitso was updated to 1.40.2-fdroid

- Kwik EFIS was updated to 8.00

- Kwik EFIS (E-Ink) was updated to 8.00

- Lalumo was updated to 7.2

- Last Launcher was updated to 1.1.0

- Launcher314 was updated to 0.0.16-beta

- LibreFit was updated to 0.3.1

- LibreTube was updated to 31.4

- Linwood Butterfly Nightly was updated to 2.5.3-rc.1

- Linwood Flow Nightly was updated to 0.6.0

- Lissen: Audiobookshelf client was updated to 1.10.1-release

- Litube was updated to v2.1.4

- Local Player was updated to 1.0.11

- LocalShare was updated to 0.2.9

- LoloTrans was updated to 2.1

- Lotus was updated to 1.6.1-community

- Luanti was updated to 5.16.1

- LunaChron was updated to 2.32.1

- Lune was updated to 1.3.0

- Lux Alarm was updated to 2.2.2

- Mages was updated to 4.7.5

- Mako was updated to 2026.41

- MarketMonk was updated to 1.0.52

- Markleaf was updated to 2.16.2

- Materialious was updated to 1.16.30

- MediLog (Non-reproducible build) was updated to 3.6.5

- MediLog (Reproducible build) was updated to 3.6.5

- MedTimer: Med & Pill Reminder was updated to 1.23.0

- Mercurygram was updated to 12.7.3.3

- Mindwtr - GTD Task Manager was updated to 0.9.7

- Minimal Kernel Manager was updated to 1.4

- Miniter was updated to 0.6.3

- Money Manager Ex was updated to 5.5.8

- monocles chat was updated to 2.2.2

- Mooneva Cycle was updated to 1.6

- MTG Familiar was updated to 3.9.16

- Music was updated to v2.5.0

- MusicSearch was updated to 1.119.1

- My Expenses was updated to 4.0.9.1

- My Price Log was updated to 0.3.2

- NATINFo+ was updated to 1.7.2

- NClientV3 was updated to 4.1.2-release

- NekoVideo was updated to 1.3.3

- NerdCalci was updated to 4.7.3

- Network Survey was updated to 1.55

- Nextcloud was updated to 33.1.2

- Nextcloud Pantry was updated to 0.14.0

- NextGIS Mobile was updated to 3.0.4

- Nontrinsic was updated to 2026.05.30

- Nora was updated to 0.7.7

- Nori was updated to 0.1.6

- NotallyX - Quick Notes/Tasks was updated to 7.11.2

- Notes was updated to v2.5.0

- Notes (Privacy Friendly) was updated to 2.2.1

- NymVPN – Anonymous No-Log VPN was updated to v3.5.0

- Offi was updated to 14.0.5

- Office Break was updated to 0.8.3

- Offline Translator was updated to 0.6.2

- Oinkoin was updated to 1.7.7

- OneTwo was updated to 2.1.4

- Onloc was updated to 1.2.4

- Open Passkey Authenticator was updated to 2.1.5

- OpenAssistant was updated to v2.5.0

- openHAB Beta was updated to 3.20.4-beta

- openScale was updated to 3.1.1

- OpenTopoMap Viewer was updated to 1.29.1

- Organic Maps・Offline Map & GPS was updated to 2026.05.27-11-FDroid

- OSMfocus Reborn was updated to 1.9.12-fdroid

- OSS-Dict was updated to 2.0.6

- P2Play - Peertube client was updated to 0.10.1

- Pachli for Mastodon was updated to 3.7.0

- Pagan was updated to 1.8.13

- PAIesque was updated to 76

- Paperless NGX Uploader was updated to 1.8.3

- PasswdSafe was updated to 6.27.2

- PDF was updated to v2.5.0

- PennyWise AI was updated to 2.15.59

- Peristyle was updated to v9.6.3

- Personal Stuff was updated to 1.4.0

- personalDNSfilter was updated to 1.50.60.7

- Photos was updated to v2.5.0

- PicGuard was updated to 5.5.3

- Pill Time was updated to 18.0

- Pineapple Lock Screen (OSS) was updated to 2.2.1-oss

- PipePipe was updated to 5.1.1

- Podcini.X - Podcast instrument was updated to 11.2.2.8

- ProtonVPN - Secure and Free VPN was updated to 5.18.75.0

- PublicArtExplorer was updated to 2.0.0

- Punch-hole Download Progress was updated to 2.3.2

- Puzzle Games was updated to 1.0.13

- Quire was updated to 2026.06.02.209

- Quitter was updated to 1.1.26

- Read You was updated to 0.16.2

- Repertoire was updated to 5.0.0

- Resticopia was updated to 0.8.7

- Rhythm was updated to 5.0.398.1045-fdroid

- RiPlay was updated to 0.7.83

- RomanDigital was updated to 3.0.2

- RSAF was updated to 4.0

- Rsync Server was updated to 0.9.11

- Ruffle was updated to 0.260602

- RustDesk was updated to 1.4.7

- Sapio was updated to 2.5.0

- Saracroche was updated to 4.1.1

- SchildiChat Next was updated to 0.11.3-ex_26_5_2

- ScoreHub - Game Score Tracker was updated to 1.11.0

- Screenshot Tile (NoRoot) was updated to 2.21.0

- SeekPrivacy was updated to 3.0.5

- SherpaTTS was updated to 3.2

- ShikiApp was updated to alpha-0.7.0

- Simple Notes Sync was updated to 2.7.1

- SimpleTextEditor was updated to 1.29.2

- Sky Map was updated to 1.15.1:Saturn

- Smart Edge: Sidebar & Gestures was updated to 1.3.5

- SmartTube F-Droid was updated to 31.73

- Snowflake Volunteer was updated to 1.9

- Sobuu was updated to 1.4.2

- SoundPod was updated to 1.2.0

- Standard Notes was updated to 3.201.28

- Sumire Japanese Keyboard Lite F-Droid was updated to 1.7.74-lite-fdroid

- Summit for Lemmy and PieFed was updated to 1.82.5-fdroid

- Super Productivity was updated to 18.8.0

- Supertonic TTS was updated to 3.1.4

- Suspension Setup was updated to 3.0.0

- SysLog was updated to 2.6.0

- Table Habit was updated to 1.24.5

- Taiga Mobile Nova was updated to 2.1.1

- TaskerHA was updated to 1.2.10

- The Light was updated to 4.02

- Thunderbird: Free Your Inbox was updated to 19.1

- Tilde Friends was updated to 0.2026.5

- Timety was updated to 1.4.1

- Tokn - MFA / 2FA authenticator was updated to 1.5.0

- Torrents Digger was updated to 1.2.3

- TourCount was updated to 3.7.8

- Traffic Light was updated to 2.18.2

- Trail Sense was updated to 8.0.1

- TransektCount was updated to 5.1.1

- Transport You was updated to 4.2

- Träwelldroid was updated to 2.25.0

- Tuta Mail was updated to 348.260526.0

- Unblock Jam was updated to v2.5.0

- Unciv was updated to 4.20.10

- UnicodePad was updated to 2.18.0-fdroid

- Universal Installer was updated to 1.8.1

- Unstoppable Crypto Wallet was updated to 0.48.3

- Urn was updated to 1.5.8

- Voice Offline Audiobook Player was updated to 26.5.3

- VoicePlus – Audiobook Player was updated to 1.22

- Weather: Cool and Hot was updated to 0.16

- WiFi Password Manager was updated to 1.13

- Wiki Fronted was updated to r/50590-r-2026-05-28

- Wikipedia was updated to r/50590-r-2026-05-28

- Wire • Secure Messenger was updated to 4.26.0

- Wispar was updated to 0.10.3

- WiVeWa was updated to 1.6.0

- Word Maker was updated to v2.5.0

- XDYou was updated to 1.6.0

- xnotes was updated to 0.6.4

- XrayFA was updated to 1.6.1

- Xtra was updated to 2.56.2

- Yagni Launcher was updated to 0.7.3-alpha

- YouPipe was updated to v2.5.0

- 素扉 was updated to 1.5.2

Thank you for reading this week’s TWIF 🙂

Please subscribe to the RSS feed in your favourite RSS application to be notified of new TWIFs as they come out.

You are welcome to join the TWIF forum thread. If you have any news from the community, post it there, maybe it will be featured next week 😉

To help support F-Droid, please check out the donation page and contribute what you can.

12:00 AM

Marketing clerks [Seth Godin's Blog on marketing, tribes and respect]

Bookkeepers do important work. But a bookkeeper is not the head of accounting.

Marketers are responsible for anything the organization does that touches the market. But many people with ‘marketer’ in their title simply go to meetings and do tasks after the real work of marketing is already done.

Some tech companies have hundreds of people in their marketing department. Most of them are simply playing catch-up, because the engineers are making all the powerful and leveraged marketing decisions.

Who is making the difficult decisions on your team? That’s the person who’s actually in charge of marketing.

Sunday 2026-06-07

11:00 AM

Pluralistic: Criticizing the everything machine (06 Jun 2026) [Pluralistic: Daily links from Cory Doctorow]


Today's links

- Criticizing the everything machine: It slices, it dices, it even makes paperclips!

- Hey look at this: Delights to delectate.

- Object permanence: Parliament v DRM; Colbert's commencement; Counterfeiting x luxury goods; Joule thief; Lean-back media.

- Upcoming appearances: Kansas City, LA, Menlo Park, Toronto, NYC, Philadelphia, Chicago, Edinburgh, South Bend.

- Recent appearances: Where I've been.

- Latest books: You keep readin' em, I'll keep writin' 'em.

- Upcoming books: Like I said, I'll keep writin' 'em.

- Colophon: All the rest.

Criticizing the everything machine (permalink)

"Gish Gallop" is the debating term for an opponent who makes so many claims that "it's impossible to address them in the time available" (it's named for Creationist Duane Gish, who was notorious for this tactic):

https://en.wikipedia.org/wiki/Gish_gallop

I think about the Gish Gallop whenever I'm asked to comment on AI.

Here's a recent example: last week, I had a pre-interview call with a radio producer who wanted me to come on a 13-minute segment to discuss "whether there's a problem with AI governance?"

I asked what the show meant by that: was it whether regulation of AI in commercial or public sector decision-making needed more oversight? Was it that the siting and provisioning of data-centers needed more democratic accountability? Was it that workers deserved more of a say in AI's impact on labor markets? Was it that customers and/or audiences should be able to opt out of AI customer service and AI slop? Was it about whether we needed some kind of system to prevent "runaway AI," in the event that we teach so many words to the word-guessing program that it wakes up, becomes God, and turns us all into paperclips?

"Oh," the producer said, "all of that."

In 13 minutes.

You see the problem, right? The AI industry has made so many claims about its past, present and future that it's almost impossible to have a reasonable critical conversation about it:

https://bsky.app/profile/petermiles.eurosky.social/post/3mnffjqczjs2t

Shortly after I did the radio show, a newspaper editor who'd heard my segment got in touch to ask me if I'd write an 800-word op-ed about the subject, and also, could I address claims that "AI is the next Industrial Revolution?"

In 800 words:

I keep finding myself on stages or panels where an AI-struck person says something like, "AI is the next industrial revolution. It will change everything we do. It will let anyone create important works of art. It will cure cancer. It will take us to space. It will solve the climate crisis."

Or sometimes it's an AI critic, but that person's criticism is really more "criti-hype," which is when you accept tech industry hype claims at face value, and then criticize them rather than questioning them:

https://peoples-things.ghost.io/youre-doing-it-wrong-notes-on-criticism-and-technology-hype/

AI criti-hype might ask what we'll do once AI takes all our jobs, or what we'll do when AI replaces the government or teachers or doctors, or what we'll do when AI can bypass our critical faculties and brainwash us or drive us all mad.

What do you say to that? I usually start by talking about whether there's any economic basis for keeping the AI servers running. AI is – by far – the money-losingest venture in human history, and it's practically impossible to overstate just how bad the AI business is. Not only does AI have terrible unit economics, those unit economics are getting worse over time:

https://pluralistic.net/2026/05/26/the-ai-will-continue/#until-morale-improves

AI's happiest customers cite cost-benefit calculations that depend on truly unimaginable subsidies from the AI companies, who are basically selling $100 bills for $5 apiece. It would be pretty amazing if you couldn't find people who'd extol the virtues of this arrangement. But when AI companies try to raise the price of those $100 bills to, say, $20 apiece, those ecstatic customers fly into a rage and start loudly proclaiming that AI is so inefficient that they will lose money on this arrangement.

Now, it shouldn't fall to me, a card-carrying member of the Democratic Socialists of America, to point out that capitalist enterprises require profits to be sustainable. You can't keep a business afloat by selling $100 bills for $5, nor for $20. You can't even make a profit selling $100 bills for $100 apiece! For a company to succeed, it needs to take in more than it expends.

AI is a money-furnace, and AI hustlers are clearly on the hunt for a way to force all of us to feed every dime we've got to it. Elon Musk's (now scuttled) gambit to make every pension saver in America bail out Grok (and Twitter, but at a mere $44b, the losses from Twitter are dwarfed by the titanic losses from Grok) was the most ambitious and shameless population-scale bag-holder scheme, but it's not the only one.

So before we ask about the capabilities AI will acquire in the future, we should at least give some consideration to the question of whether anyone will be willing to fund the development of those capabilities, and if so, where the money would come from. Likewise, before we ask whether AI can perform adequately in a job, we should at least consider the possibility that the company that sells that AI tool will be bankrupt in a year or two. When we fight about data-center buildout, we mostly talk about the (considerable) environmental downsides to them – but what about the question of what we will do with these data-centers after their owners go bankrupt, possibly even before they can be provisioned with electricity? How many laser-tag arenas do we actually need?

This is just one example of the questions that you could spend days unpacking, which make many of the other questions about AI a little silly. Like, even if you think there are limitless returns to scale for creating new AI capabilities, which means that if we keep the money-furnace burning it's only a matter of time until it powers a cure for cancer and the end of the climate emergency, how much money do we need to shovel into the furnace before that happens, and where will it come from? There are plenty of cancer researchers who have promising approaches they haven't been able to pursue due to funding shortfalls.

Unless there's some way to estimate how much money we have to give to AI companies before they cure cancer, we should at least consider the possibility that the true sum is "more money than exists now and that will ever exist." We should also consider that whatever benefits to cancer research that AI might deliver could come with a higher price-tag than the promising cancer research we're dropping because we can't find far more modest sums.

Likewise, it may be that the amount of CO2 that AI will release into the atmosphere before it "solves climate change" will render Earth permanently unfit for humans, consuming the only planet capable of sustaining human life in the known universe. I mean, I suppose that's one way to "solve" climate change, but it's a pretty drastic solution.

My next book (out later this month) is The Reverse Centaur's Guide to Life After AI. I wrote it because I was frustrated by other people demanding that I talk to them about AI, and then handing me 800 words or 13 minutes to address fifty nebulous, poorly supported claims about AI:

https://us.macmillan.com/books/9780374621568/thereversecentaursguidetolifeafterai/

Shortly after writing it, I turned it into a lecture:

https://pluralistic.net/2025/12/05/pop-that-bubble/#u-washington

Now that I'm about to go out on the road with the book, I find myself frustrated anew by the need to try and pull together a compact way to address the broad, incoherent claims the industry uses to keep its bubble inflated and the money furnaces roaring. The series of essays I've developed here on Pluralistic is part of that effort:

https://pluralistic.net/2026/05/27/unnecessariat/#rubbuts-stole-my-jerb

But it occurred to me that this whole enterprise of making sense of AI needs to be framed in the context of the messiness of AI itself, and AI boosters' overwhelming, promiscuous and disjointed Gish Gallop.

Hey look at this (permalink)

- What happens when your phone is confiscated at the airport https://www.theverge.com/report/944076/cbp-airport-phone-searches-seizure-minneapolis-activists

- A Billionaire Explains Why American Business Now Feels like the Mafia https://www.thebignewsletter.com/p/a-billionaire-explains-why-american

- These Republican Lawmakers Challenged Abortion Bans. Then They Faced Backlash. https://www.propublica.org/article/republicans-face-backlash-after-challenging-abortion-bans

- Debbie Downer https://prospect.org/2026/06/05/debbie-downer-wasserman-schultz-florida-house-races/

- Mechanical Pencil https://mechanical-pencil.com/

Object permanence (permalink)

#20yrsago UK Parliament report damns DRM, calls for limits https://web.archive.org/web/20060615115510/http://www.openrightsgroup.org/2006/06/05/launch-of-the-apig-report-on-drm/

#20yrsago Colbert’s Knox College commencement speech https://web.archive.org/web/20111228135413/http://departments.knox.edu/newsarchive/news_events/2006/x12547.html

#15yrsago Counterfeiting can be good for luxury goods sales https://web.archive.org/web/20110602061646/http://www.slate.com/id/2294927/

#15yrsago HOWTO make a Joule Thief and get all the power you’ve paid for https://www.instructables.com/Make-a-Joule-Thief/

#15yrsago School suspends student for refusing to remove personal animation from YouTube, threatens other students for petitioning on his behalf https://web.archive.org/web/20110603041200/https://www.theglobeandmail.com/news/national/toronto/student-cites-freedom-of-speech-after-suspension-for-online-videos/article2043954/

#5yrsago Recommendation engines and "lean-back" media https://pluralistic.net/2021/06/05/lean-back/#lean-forward

Upcoming appearances (permalink)

- Kansas City: Facing the Future (Woodneath Library Center), Jun 10
  https://www.mymcpl.org/events/119655/facing-future-cory-doctorow

- LA: The Reverse Centaur's Guide to Life After AI with Brian Merchant (Skylight Books), Jun 19
  https://www.skylightbooks.com/event/skylight-cory-doctorow-presents-reverse-centaurs-guide-life-after-ai-w-brian-merchant

- Menlo Park: The Reverse Centaur's Guide to Life After AI with Angie Coiro (Kepler's), Jun 21
  https://www.keplers.org/upcoming-events-internal/cory-doctorow-2026

- Toronto: TBA, Jun 23

- NYC: The Reverse Centaur's Guide to Life After AI with Jonathan Coulton (The Strand), Jun 24
  https://www.strandbooks.com/cory-doctorow-the-reverse-centaur-s-guide-to-life-after-ai.html

- Philadelphia: The Reverse Centaur's Guide to Life After AI with David Williams (Fitler Club/Philadelphia Citizen), Jun 25
  https://www.eventbrite.com/e/cory-doctorow-book-event-tickets-1990110326559

- Chicago: The Reverse Centaur's Guide to Life After AI with Rick Perlstein (Exile in Bookville), Jun 26
  https://exileinbookville.com/events/50628

- Edinburgh International Book Festival with Jimmy Wales, Aug 17
  https://www.edbookfest.co.uk/events/the-front-list-cory-doctorow-and-jimmy-wales

- South Bend: An Evening With Cory Doctorow (Notre Dame), Oct 6
  https://franco.nd.edu/events/2026/10/06/an-evening-with-cory-doctorow/

Recent appearances (permalink)

- Cory Doctorow's digital jail-break (DW In Focus)

https://www.dw.com/en/cory-doctorows-digital-jail-break/audio-77414035

- Why the Internet Got Worse and What to Do About It (Jim Rutt) (RIP)

https://www.jimruttshow.com/cory-doctorow-3/

- On Enshittification – and what can be done about it (Re:publica)

https://www.youtube.com/watch?v=KhINQgPMVSI

- EFFecting Change: How to Disenshittify the Internet (EFF, with Wendy Liu)

https://archive.org/details/effecting-change-enshittification

- The “Enshittification” of Everything (Bioneers)

https://bioneers.org/cory-doctorow-enshittification-of-everything-zstf2605/

Latest books (permalink)

- "Canny Valley": A limited edition collection of the collages I create for Pluralistic, self-published, September 2025 https://pluralistic.net/2025/09/04/illustrious/#chairman-bruce

- "Enshittification: Why Everything Suddenly Got Worse and What to Do About It," Farrar, Straus, Giroux, October 7, 2025

https://us.macmillan.com/books/9780374619329/enshittification/

- "Picks and Shovels": a sequel to "Red Team Blues," about the heroic era of the PC, Tor Books (US), Head of Zeus (UK), February 2025 (https://us.macmillan.com/books/9781250865908/picksandshovels).

- "The Bezzle": a sequel to "Red Team Blues," about prison-tech and other grifts, Tor Books (US), Head of Zeus (UK), February 2024 (thebezzle.org).

- "The Lost Cause": a solarpunk novel of hope in the climate emergency, Tor Books (US), Head of Zeus (UK), November 2023 (http://lost-cause.org).

- "The Internet Con": A nonfiction book about interoperability and Big Tech (Verso), September 2023 (http://seizethemeansofcomputation.org). Signed copies at Book Soup (https://www.booksoup.com/book/9781804291245).

- "Red Team Blues": "A grabby, compulsive thriller that will leave you knowing more about how the world works than you did before." Tor Books http://redteamblues.com.

- "Chokepoint Capitalism: How to Beat Big Tech, Tame Big Content, and Get Artists Paid," with Rebecca Giblin, on how to unrig the markets for creative labor, Beacon Press/Scribe 2022 https://chokepointcapitalism.com

Upcoming books (permalink)

- "The Reverse-Centaur's Guide to AI," a short book about being a better AI critic, Farrar, Straus and Giroux, June 2026 (https://us.macmillan.com/books/9780374621568/thereversecentaursguidetolifeafterai/)

- "Enshittification: Why Everything Suddenly Got Worse and What to Do About It" (the graphic novel), FirstSecond, 2026

- "The Post-American Internet," a geopolitical sequel of sorts to Enshittification, Farrar, Straus and Giroux, 2027

- "Unauthorized Bread": a middle-grades graphic novel adapted from my novella about refugees, toasters and DRM, FirstSecond, April 20, 2027

- "The Memex Method," Farrar, Straus, Giroux, 2027

Colophon (permalink)

Today's top sources:

Currently writing: "The Post-American Internet," a sequel to "Enshittification," about the better world the rest of us get to have now that Trump has torched America. Third draft completed. Submitted to editor.

- "The Reverse Centaur's Guide to AI," a short book for Farrar, Straus and Giroux about being an effective AI critic. LEGAL REVIEW AND COPYEDIT COMPLETE.

- "The Post-American Internet," a short book about internet policy in the age of Trumpism. PLANNING.

- A Little Brother short story about DIY insulin. PLANNING.

This work – excluding any serialized fiction – is licensed under a Creative Commons Attribution 4.0 license. That means you can use it any way you like, including commercially, provided that you attribute it to me, Cory Doctorow, and include a link to pluralistic.net.

https://creativecommons.org/licenses/by/4.0/

Quotations and images are not included in this license; they are included either under a limitation or exception to copyright, or on the basis of a separate license. Please exercise caution.

How to get Pluralistic:

Blog (no ads, tracking, or data-collection):

Newsletter (no ads, tracking, or data-collection):

https://pluralistic.net/plura-list

Mastodon (no ads, tracking, or data-collection):

Bluesky (no ads, possible tracking and data-collection):

https://bsky.app/profile/doctorow.pluralistic.net

Medium (no ads, paywalled):

Tumblr (mass-scale, unrestricted, third-party surveillance and advertising):

https://mostlysignssomeportents.tumblr.com/tagged/pluralistic

"When life gives you SARS, you make sarsaparilla" -Joey "Accordion Guy" DeVilla

READ CAREFULLY: By reading this, you agree, on behalf of your employer, to release me from all obligations and waivers arising from any and all NON-NEGOTIATED agreements, licenses, terms-of-service, shrinkwrap, clickwrap, browsewrap, confidentiality, non-disclosure, non-compete and acceptable use policies ("BOGUS AGREEMENTS") that I have entered into with your employer, its partners, licensors, agents and assigns, in perpetuity, without prejudice to my ongoing rights and privileges. You further represent that you have the authority to release me from any BOGUS AGREEMENTS on behalf of your employer.

ISSN: 3066-764X

Just for Skeets and Giggles (6.6.26) [The Status Kuo]

A note to readers: On Monday, Facebook cut off my team’s ability to monetize our content, with no explanation or warning. We’re appealing but haven’t heard back. Meanwhile, we’re scrambling to make up for the sudden shortfall without having to make staffing cuts. I’m taking no salary while we try to recover from this blow.

I hate being under the thumb of these billionaire oligarchs and their AI algorithms that treat content creators like garbage. Your financial support of our work gives us greater independence from the big platforms and allows us to keep our content free for readers on fixed income or disability.

If you’ve been meaning to become a paid supporter, this would be an amazing time to upgrade and help. If you’re already a paid subscriber, thanks for the vote of confidence! My team will make it through this tough period, but we deeply appreciate any support readers can provide.

Sleepy Don was back as a theme this week, especially after Marco Rubio testified under oath that he’s never seen the president fall asleep. This was just the other day in the Oval Office:

New portrait for the $250 bill just dropped.

Speaking of that new bill, Seth Meyers had some thoughts on it and the rest of this week’s news.

Note: Xcancel links mirror Twitter without sending traffic. Some GIFs may load; just swipe them down. Issues? Click the gear on the Xcancel page’s upper right, select “proxy video streaming through the server,” then “save preferences” at the bottom. For sanity, don’t read the comments; they’re all bots and trolls. Won’t load? Paste the link into your browser and remove “cancel” after the X in the URL.

This is an old meme, but my friend sent it to me and I still laughed, so here it is again.

Jimmy Kimmel’s all out of Fs to give, and it’s pretty glorious. Here’s a recap of the week, and really these 16 months in hell too.

The cancellation of the Freedom 250 concert after most of the musical acts backed out was highly embarrassing for the President, as I wrote earlier with this header image, if you missed it:

Instead of Milli Vanilli, may I suggest this pair.

Gurl, we know it’s you.

A frustrated Trump even said he’d do the concert himself.

And he gave us a quote for the ages, which fairly sums up the last decade:

Speaking of dumb things, it came out that part of the deal being negotiated with Iran includes a possible $300 billion reparations tab, to be paid by us. The public’s reaction:

A theme soon emerged from this revelation.

Give him the Nobel, stat.

In other revelations, Trump has been trading stocks in un-presidented amounts since taking office, while pumping the ones he owns in speeches and on social media—and paying $200 for failing to disclose his trades on time.

We don’t know how much he made, but it feels like this:

Movies pretty much have all the right references for times like these.

Or television.

Or viral clips:

It wasn’t all terrible news. A judge ordered Trump’s name off the Kennedy Center for the Performing Arts. And it might just be the start of taking it off everything.

If we don’t stop him soon, all of Washington, D.C. will begin to bear his mark.

That UFC ring though…

Let’s not forget what he wants us all to stop thinking about.

Trump said he would nominate Todd Blanche as the permanent AG, and Blanche got straight to work.

While Pete Hegseth has been blocking promotions for women and minorities, he showed us what real men running things look like.

We have a possible explanation for why he embarrassed himself in this fashion.

Not to be outdone, Kash Patel had another use for government property.

Texas GOP voters chose someone even more compromised than Trump as their Senate nominee.

In this age of obsequiousness and gross flattery, Pixar went political without saying it was going political.

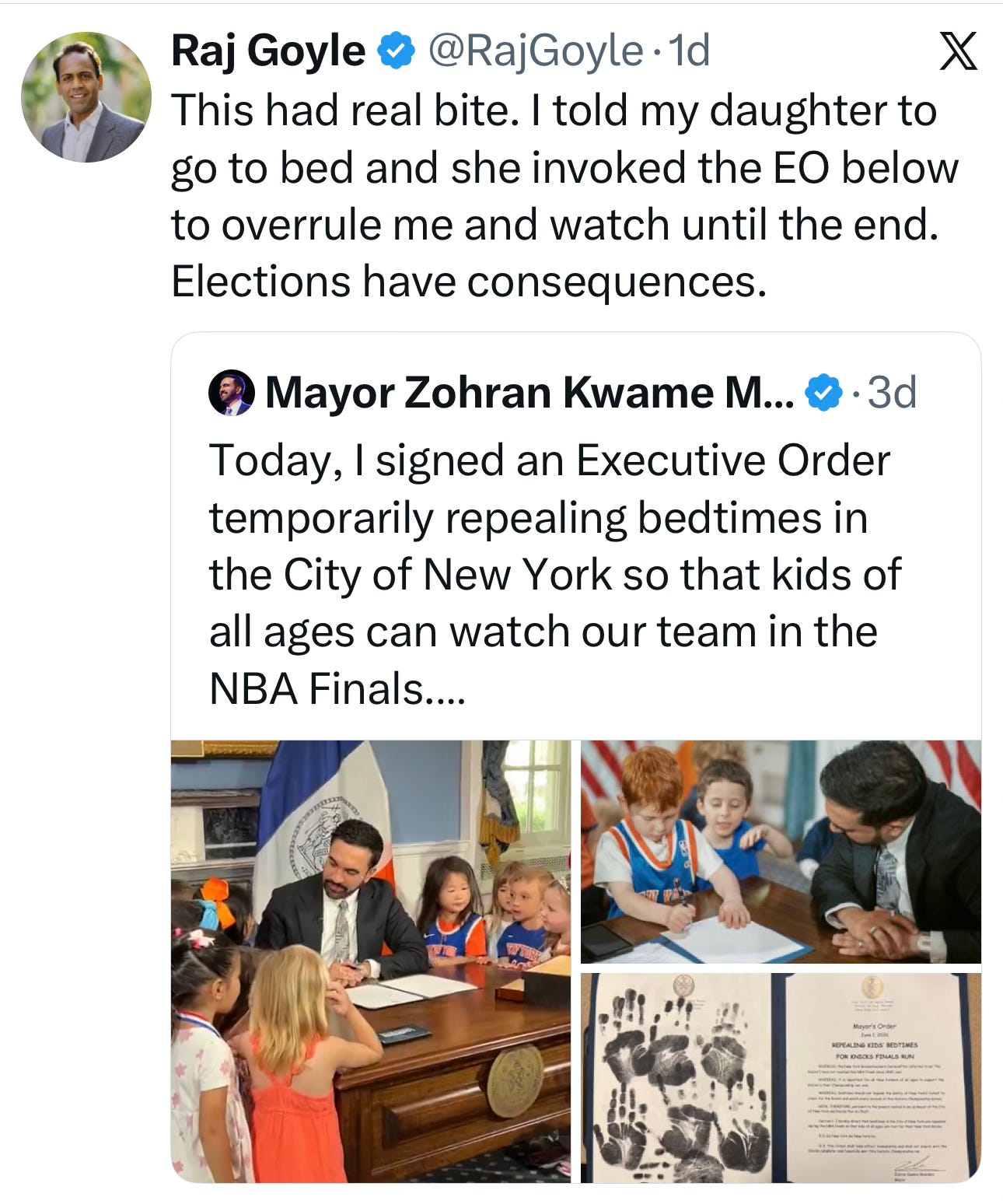

But Dem leaders know how to make us really smile.

I wrote about The Odyssey recently, and I guess the right, including Elon Musk, is up in arms about (checks notes) a Black Helen of Troy. Jimmy Kimmel on that meltdown:

Honestly, it explains why they were so mad about the Black Little Mermaid, too.

This did not go as I’d expected, but I completely get it!

Trust is learned over time. So far, my corgi, Windsor, remains skeptical, but these doggos are all in!

Like I said, trust must be earned.

I couldn’t stop laughing at the conversation here.

Some pups are just natural conversationalists.

I have not attempted this ring toss with my corgi yet, but given that I can barely get her harness on due to all the wriggling, I do not think it would go well.

I also have not attempted this toss with my dog.

I was transfixed by this moment, even though I knew how it would end. ❤️

Too soon for an R. Kelly reference with this kitty?

Oh, wait, someone actually made an R. Kelly reference for this aerialist. (Kitty is fine, just a bruised ego.)

So I guess this was just a tit-for-cat interaction?

Oh, you sneaky bastard.

This precious baby!

This was like a physics lesson.

Fascinating creature, hilarious caption.

I didn’t even know they sneezed!

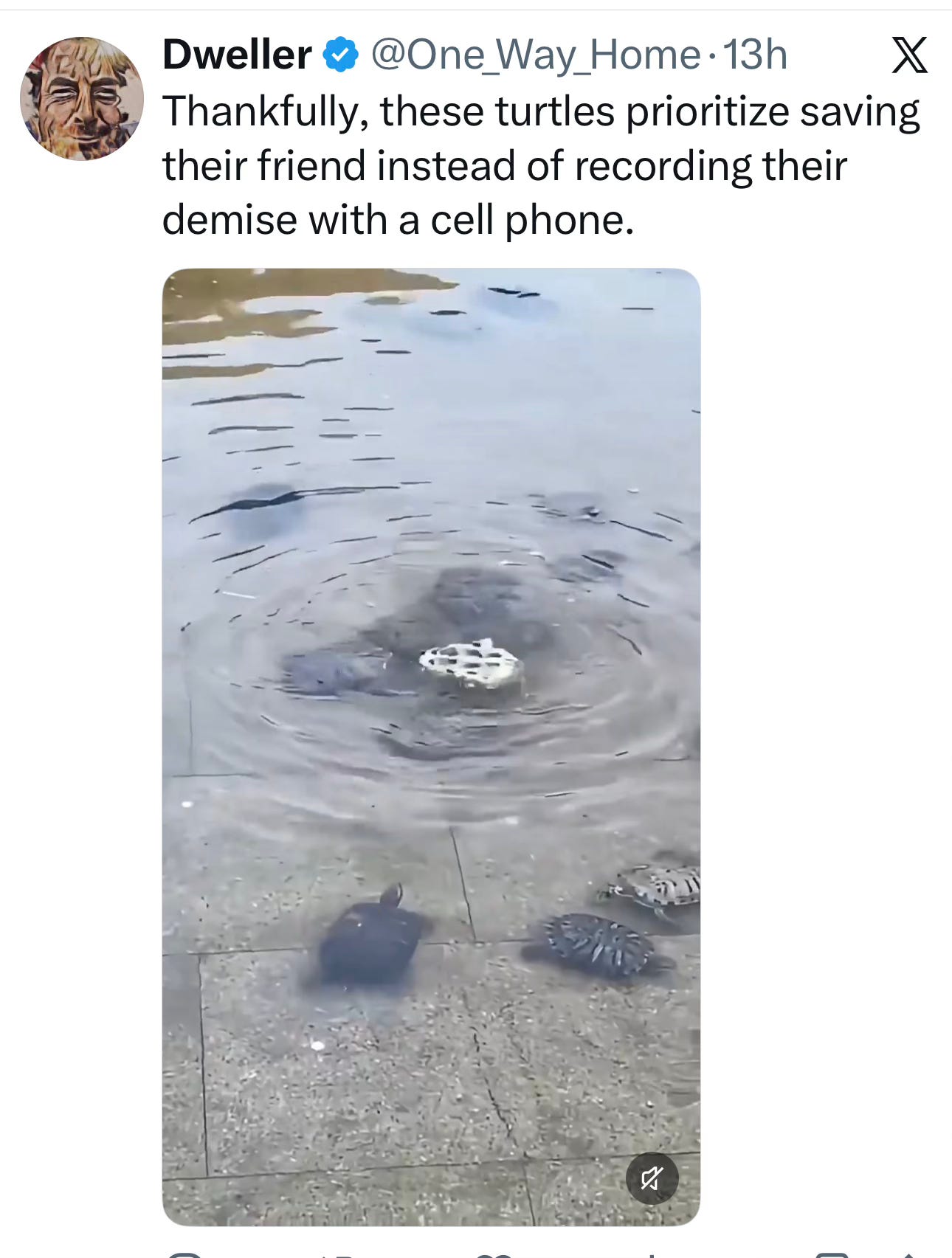

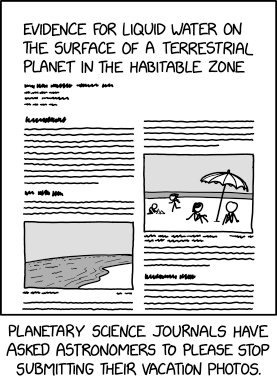

This was a sharp dig at humans.

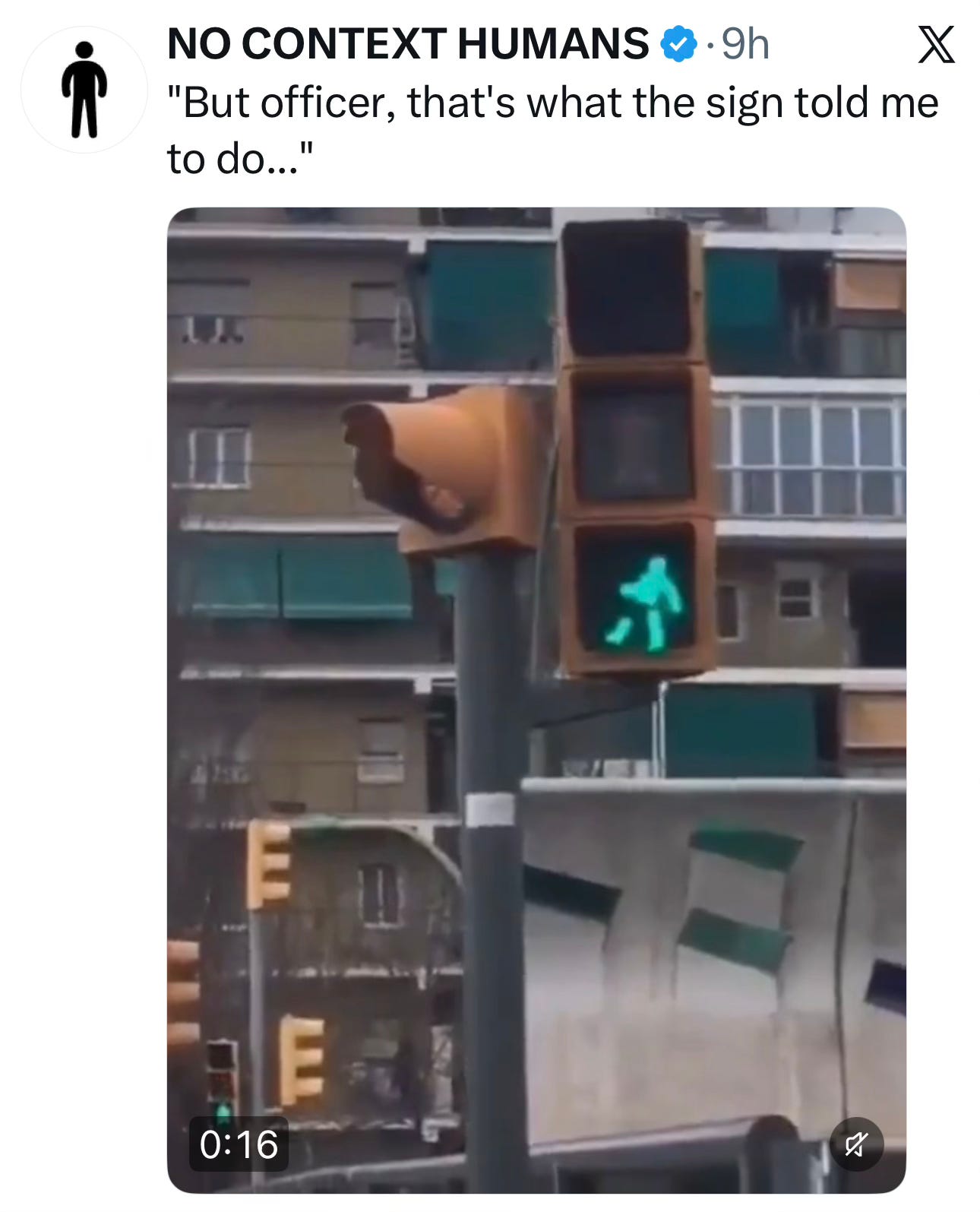

Speaking of humans and their documentary devices:

Dionne Warwick has game.

France prevailed in the game but still rioted in the streets… in a unique way.

From closer up:

People nowadays.

If this is AI, it’s really good AI, but after some digging, I still think it’s real and quite something.

My sister sent me this.

The end times are nigh.

Many readers here will sympathize.

One of the better uses of the technology I’ve seen.

Grammar. Very important.

I debated including this, but come on.

Placement is everything.

This would totally be me.

I agree! My kids’ laughs are a balm for the soul. Try not to smile at these!

The clip of the week goes to Samuel, who has devoted himself so fully to this art form that he is likely the best in the world at it. Yay, Samuel!

I didn’t come across a great dad joke to end this collection. The omission is ap-parent.

Have a great weekend!

Jay

09:00 AM

Kanji of the Day: 課 [Kanji of the Day]

課

✍15

小4

chapter, lesson, section, department, division, counter for chapters (of a book)

カ

課題 (かだい) — subject

課長 (かちょう) — section manager

課税 (かぜい) — taxation

放課後 (ほうかご) — after school (at the end of the day)

課程 (かてい) — course

課金 (かきん) — charges

総務課 (そうむか) — general affairs section

非課税 (ひかぜい) — tax-exempt

企画課 (きかくか) — planning section

日課 (にっか) — daily routine

Generated with kanjioftheday by Douglas Perkins.

Kanji of the Day: 辱 [Kanji of the Day]

辱

✍10

中学

embarrass, humiliate, shame

ジョク

はずかし.める

雪辱 (せつじょく) — vindication of honor

屈辱 (くつじょく) — disgrace

侮辱 (ぶじょく) — insult

屈辱的 (くつじょくてき) — humiliating

雪辱戦 (せつじょくせん) — return match

侮辱罪 (ぶじょくざい) — defamation (i.e., slander, libel)

恥辱 (ちじょく) — disgrace

辱める (はずかしめる) — to put to shame

国辱 (こくじょく) — national disgrace

陵辱 (りょうじょく) — insult

Generated with kanjioftheday by Douglas Perkins.

05:00 AM

This Week In Techdirt History: May 31st – June 6th [Techdirt]

This Week in 2016

- Independent Musician Sues Justin Bieber & Skrillex For Copyright Infringement… Over A Sample They Didn’t Use

- Oracle’s Lead Lawyer Against Google Vents That The Ruling ‘Killed’ The GPL

- Top Internet Companies Agree To Vague Notice & Takedown Rules For ‘Hate Speech’ In The EU

- This Is Bad: Court Says Remastered Old Songs Get A Brand New Copyright

- Investigation Shows GCHQ Using US Companies, NSA To Route Around Domestic Surveillance Restrictions

- Another Court Says Law Enforcement Officers Don’t Really Need To Know The Laws They’re Enforcing

This Week in 2011

- NBC News Produces Propaganda Video Highlighting NBC’s Views On Domain Seizures

- Hacking Egypt For Better Democracy

- Senators Want To Put People In Jail For Embedding YouTube Videos

- Talking About Why The PROTECT IP Act Is Bad News…

- RIAA Wants To Put People In Jail For Sharing Their Music Subscription Login With Friends

- Entertainment Industry Lawyer: The Public Domain Goes Against Free Market Capitalism

This Week in 2006

- War Propaganda Is Fun On Your Xbox 360

- How The Entertainment Industry Plans To Kill DVDs

- Can The Internet Destroy The Two Party Political System?

- Forget Astroturf, Fake Net Neutrality Commenters Popping Up Like Weeds

- Regulation For Regulation’s Sake

- DOJ Can’t Get Net Firms To Agree On Data Retention; Expect Legislation

Saturday 2026-06-06

11:00 PM

Real artists… [Seth Godin's Blog on marketing, tribes and respect]

Real artists do all the painting themselves, not like Rembrandt

Real artists use brushes, not technology like Cartier-Bresson

Real writers write it out by hand, not like Jack Kerouac

Real musicians record it live, not like Steely Dan

Real singers sing without processing, not like Kanye West and Daft Punk

Real directors do the prep without AI, not like Martin Scorsese

It turns out that real artists have always used technology. What they have in common is intent, responsibility, and the ability to create a feeling in the audience.

“Here, I made this.”

YouTube Processed 2.5 Billion Content ID Copyright Claims in 2025 [TorrentFreak]

To protect rightsholders, YouTube regularly removes, disables, or demonetizes videos that contain allegedly infringing content.

For years, little was known about the scope of these copyright actions, but that changed in late 2021 when the streaming platform published its first-ever copyright transparency report.

This report and the subsequent updates have shown that roughly 99% of all copyright claims on YouTube are handled through the Content ID system. Since most claims are automated, without any human intervention, access to this powerful removal tool is restricted to a few thousand formally approved rightsholders.

2.5 Billion Claims

YouTube’s latest Transparency Report shows that the number of automated claims continues to rise. In 2025, the platform processed 2,502,941,368 Content ID claims, up 14% from 2.2 billion the year before.

Of the 7,626 approved rightsholders who currently have access to the system, 4,454 actively used it. Both numbers are slightly down from last year. YouTube doesn’t provide a specific reason, but notes that access can be revoked as part of regular evaluations.

“To keep the ecosystem safe, we regularly evaluate partners’ access to CID to ensure they demonstrate an ongoing need for scaled rights management. In some cases, these evaluations may result in removing a partner’s access to Content ID and matching them with a more appropriate copyright management tool,” the transparency report reads.

As clearly shown above, the number of rightsholders participating in the Content ID system pales in comparison to the 295,531 rightsholders who filed removal requests through the standard webform, or the 173,338 that used the automated Copyright Match Tool.

Nonetheless, Content ID’s 4,454 active rightsholders were responsible for 99.48% of all copyright actions on the video streaming platform. Compared to earlier years, automated Content ID actions continue to increase, both as a share of the total and in absolute numbers.

Millions of Disputed Claims

As with any takedown tool, uploaders and third-party rightsholders are not always in agreement. In fact, there are millions of Content ID disputes every year.

YouTube reports that of all Content ID claims, uploaders have disputed 12,840,608, or 0.51% of the total. That’s a relatively small percentage but still a rather large absolute number. For comparison, uploaders appealed 9.9% of all webform removals, which translates to a little over 267,000 counter-notices.
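The percentages above can be cross-checked against the raw counts with a little arithmetic. This is a minimal sanity check in Python. It uses only the figures quoted in this article and treats the rounded "2.2 billion" 2024 total as exact, so the growth rate is approximate. The total number of webform removals is inferred from the 9.9% appeal rate, not taken from the report.

```python
# Figures as quoted from YouTube's 2025 transparency report.
content_id_claims_2025 = 2_502_941_368
content_id_claims_2024 = 2.2e9         # published only as a rounded "2.2 billion"
content_id_disputes_2025 = 12_840_608
webform_counter_notices = 267_000      # "a little over 267,000"
webform_appeal_rate = 0.099            # 9.9% of webform removals were appealed

# Year-over-year growth in Content ID claims (approximate, given the rounded 2024 figure).
growth = content_id_claims_2025 / content_id_claims_2024 - 1

# Fraction of all Content ID claims that uploaders disputed.
dispute_rate = content_id_disputes_2025 / content_id_claims_2025

# Inferred (not reported) number of webform removals implied by the appeal rate.
implied_webform_removals = webform_counter_notices / webform_appeal_rate

print(f"Year-over-year growth: {growth:.1%}")
print(f"Share of Content ID claims disputed: {dispute_rate:.2%}")
print(f"Implied webform removals: ~{implied_webform_removals / 1e6:.1f} million")
```

On these inputs the dispute rate comes out at about 0.51% and growth at roughly 14%, in line with the report. The appeal figures imply something on the order of 2.7 million webform removals.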

In 2024, uploaders won 70% of disputes. In 2025, that figure dropped slightly to 67.42%. However, those who decided to challenge the rejection through YouTube’s process won their appeal 75% of the time.

The flow chart on the right illustrates the full appeals process.

Not all disputes are resolved through YouTube’s internal Content ID process. If uploaders insist that their content was erroneously claimed, while rightsholders argue the opposite, YouTube will reinstate the video, at which point rightsholders have to take the matter to court.

In 2025, 10,698 claims reached this stage, but fewer than 1% of these resulted in a lawsuit, YouTube notes.

Outside the Content ID system, YouTube also flags abuse of its DMCA takedown webform as a problem. In 2025, more than 6% of all these removal requests were believed to be “a likely false assertion of copyright ownership” by YouTube’s review team.

“The attempted abuse rate through the webform was more than 10 times higher than the attempted abuse rate across all other copyright removal tools,” the transparency report notes.

A $12 Billion Revenue Machine

While YouTube’s Content ID can be a significant source of frustration for uploaders, it has become a substantial revenue stream for rightsholders. Instead of removing infringing content, rightsholders chose to monetize over 90% of all Content ID claims in 2025.

YouTube reports that cumulative ad revenue paid to rightsholders through Content ID has now exceeded $12 billion since the system launched. That figure includes data up to December 2024 and will likely be billions higher today.

It is clear that not being present on YouTube at all is no longer an economically wise decision. On the contrary, for some rightsholders a viral infringing upload is no longer a problem, but a revenue opportunity instead.

From: TF, for the latest news on copyright battles, piracy and more.

01:00 PM

‘Tomb Raider’ Remake Developed Using Some AI, Everyone Freaks, Crystal Dynamics Responds, And I’m Confused [Techdirt]

Full disclosure: this post is going to pose way more questions than answers. That’s because the story of the Tomb Raider remake being produced by Crystal Dynamics and its inclusion of an AI disclosure on Steam makes no sense to me.

So, let’s start at the beginning. Crystal Dynamics is making an updated version of the first Tomb Raider game and it looks pretty great from what I’ve seen. But, as gamers are now accustomed to doing, the public came to notice that the game’s Steam page included one of Steam’s mandatory AI disclosure notices. It reads thusly:

AI-assisted tools were used during development to support some early exploration and temporary development content. Any AI-assisted assets were either replaced or refined by humans in order to maintain the creative and artistic vision of the development team.

And from there, of course, everyone freaked out. Comments from all the corners of the internet began flooding in, swearing off ever touching this game because it was developed using AI. What AI? We don’t know. How much was it utilized? No real details there, either. But is the game going to be good? It doesn’t fucking matter, because AI was used and that’s all you need to know in order to know that this game is going to be artless pap fit only to be mocked and laughed at.

Even some gaming journalists have gotten into the habit. This is from Kotaku:

GenAI slop potentially showing up in Tomb Raider is disappointing but maybe less surprising than it should be. Phil Rogers, CEO of Crystal Dynamics’ parent company, Embracer Group, last year called genAI a “powerful technology” for “driving efficiency.” Crystal Dynamics has also undergone several rounds of layoffs, completing three just last year and one earlier in 2026.

Here you have a writer who has already reached their conclusion while having almost zero information on which to base that conclusion. They haven’t played the game. They’ve barely seen the content in the game, save for some trailers. They don’t know thing one about how AI was used, where, and in what way. But it’s probably going to be “GenAI slop”. As if there is simply no other possible outcome.

But it gets at least slightly stranger: Crystal Dynamics responded to some of those concerns over at Eurogamer.

“At Crystal Dynamics, we leverage AI tools to help our teams iterate on ideas faster and more efficiently, while ensuring that all finished content in the final product is human-crafted. Our goal is to empower the creativity and flexibility of our developers to deliver the highest-quality experiences for players everywhere.”

This is where I get confused. If all of the content that is going to make it into the final product is “human-crafted,” then they shouldn’t even have needed to add the disclosure to their Steam page. Back in January, Steam updated its AI disclosure rules so that a disclosure is required only when AI-generated content appears either in the game’s public marketing materials or in the final product, where players interact with it.

In its submission form, Valve now specifies that game publishers must disclose pre-made generative AI assets only when used in marketing materials or content that “ships with your game, and is consumed by players.”

In other words, Steam’s disclosure requirement is not concerned with generative AI tools used behind the scenes for efficiency gains (presumably including coding helpers) or office work, but with things like final art, sound, and writing.
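Taken exactly as paraphrased above, the rule reduces to a simple predicate. The following is a toy Python model, not Valve’s actual review logic. The `AIUse` record and its two flags are hypothetical, and the example takes Crystal Dynamics’ statement at face value.

```python
from dataclasses import dataclass


@dataclass
class AIUse:
    """One use of generative AI during development (hypothetical model)."""
    description: str
    in_marketing: bool = False        # appears on the store page, in trailers, etc.
    ships_and_consumed: bool = False  # ships with the game and players encounter it


def disclosure_required(uses: list[AIUse]) -> bool:
    """Disclosure is needed only if some AI-generated content reaches marketing
    materials or ships in the game for players to consume. Purely internal,
    behind-the-scenes use does not count under this reading of the rule."""
    return any(use.in_marketing or use.ships_and_consumed for use in uses)


# Crystal Dynamics' statement taken at face value: AI helped with early
# exploration and temporary content, and all finished content is human-crafted.
as_stated = [
    AIUse("early exploration"),
    AIUse("temporary development content, later replaced"),
]
print(disclosure_required(as_stated))  # False
```

Under that reading, AI use confined to early exploration and replaced placeholders never triggers the requirement. That is the tension described above.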

Now, I’ll just note that there is a subtle difference between the disclosure notice and Crystal Dynamics’ statement. The former indicates that the game may include AI-generated assets that were then iterated upon by a human developer. The latter seems to say the opposite, where everything in the final game will be “human-crafted”. So… which is it?

As I said from the start, more questions than answers is all I have at the moment. But if the gaming public is going to freak out at the mere mention of some AI being used in some way, somewhere within every new video game that comes out, then this is going to be a very annoying time in which to be a gamer.

11:00 AM

Pluralistic: Refining humanity (05 Jun 2026) [Pluralistic: Daily links from Cory Doctorow]

Today's links

- Refining humanity: What our technology is shows us what we're not.

- Hey look at this: Delights to delectate.

- Object permanence: GNU Radio; France v "follow us on Twitter"; Aaronsw vindicated; Capitalism's crooked refs.

- Upcoming appearances: Kansas City, LA, Menlo Park, Toronto, NYC, Edinburgh, South Bend.

- Recent appearances: Where I've been.

- Latest books: You keep readin' em, I'll keep writin' 'em.

- Upcoming books: Like I said, I'll keep writin' 'em.

- Colophon: All the rest.

Refining humanity (permalink)

One of the best ways to evaluate your own understanding of a subject is to attempt to explain it to someone else. Through explaining things, we discover how much of the "totally obvious" world is actually full of ambiguity, mystery and contradiction.

There's a great bit in Rowan Atkinson's historical sitcom Blackadder that illustrates this principle. In "Ink and Incapability," Blackadder and friends have accidentally burned the only copy of Samuel Johnson's original dictionary of the English language. To cover up their mistake, they decide that they will recreate the dictionary themselves. However, they founder on the first word they try to define, "A":

Blackadder: Let's start at the beginning, shall we? First: 'A.' How would you define 'A'?

Prince George: Ohh…'A' (continues this in background). Oh, I love this! I love this! Quizzies! Erm, hang on, it’s coming. Ooh, crikey, erm, oh yes, I’ve got it!

B: What?

PG: Well, it doesn’t really mean anything, does it?

B: Good. So we're well on the way, then. "'A'; impersonal pronoun; doesn't really mean anything."

I mean, what does "A" mean? The Oxford English Dictionary has more than a dozen definitions, and just the first one runs to more than 1,500 words:

Now, normal life involves a lot of explaining things to other people. You have to explain your problems to customer service reps, who have to explain why they can't solve those problems to you. You need to explain to your loved ones why you want to leave your toothbrush in the shower, and they have to explain why they hate having your toothbrush in the shower. These explanation-exchanges teach you as much as they teach the person you're locked in dialog with. The reasons for leaving your toothbrush in the shower may seem totally obvious to you, and your partner's inability to understand this reveals the assumptions you've never even considered.

For the past four decades, an increasing proportion of the population have spent an increasing proportion of their lives explaining things to machines that have no assumptions or shared context: computers. What we call "programming a computer" is really "breaking down a thing that seems obvious to you into increasingly simple instructions that will be followed to the letter."

Computers are like the genies of legend, bloody-minded literalists who will do exactly what you say, in the way that is perversely furthest from what you mean. To get a computer to do anything, you must first understand it to a degree that far exceeds the understanding needed to explain something to any other human, even a small child.

To take just one example: yesterday, I was on a plane, and the seatback video started cycling through its video-on-demand offerings. All of the movie titles that began with "the" were rewritten to put "the" at the end of the title (for example, "The Sting" was written as "Sting, The"). It's obvious why the system's designer had done this: we expect to find movies whose titles begin with "The" alphabetized under their second word ("The Sting" should appear between "Star Wars" and "Story of a Love Affair," not between "The Godfather" and "The Untouchables").

I remember when I learned this from my elementary school's teacher-librarian, when I was seven and my class got a tutorial on the school library's card catalog. The librarian explained this principle to us in a matter of minutes, as part of a longer set of instructions, and still, it stuck with me forever.

But here we are, 48 years later, and we still haven't standardized a way to get computers to grasp this foundational principle of alphabetization. Many different databases handle this, to be sure, but it's so inconsistent across so many platforms that someone at the head-end of the video distribution system that feeds American Airlines' VOD system decided, "Fuck it, I'm just gonna put the 'The' at the end of these titles."
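The librarian's rule is easy to state and only slightly harder to encode. Here is a minimal Python sketch. It assumes English titles and handles only a leading "The", since real catalogs usually also skip "A", "An", and articles in other languages. Instead of rewriting the display title as "Sting, The", it sorts on a separate filing key and leaves the title itself untouched.

```python
import re

# Matches a leading English "The" followed by whitespace, case-insensitively.
# Only "The" is handled, mirroring the example in the text.
LEADING_THE = re.compile(r"^the\s+", re.IGNORECASE)


def filing_key(title: str) -> str:
    """Return the string a catalog would alphabetize `title` under."""
    return LEADING_THE.sub("", title).casefold()


def catalog_order(titles: list[str]) -> list[str]:
    """Sort by filing key while keeping each title's original display text."""
    return sorted(titles, key=filing_key)


titles = ["The Untouchables", "Star Wars", "The Sting",
          "The Godfather", "Story of a Love Affair"]
for title in catalog_order(titles):
    print(title)
```

This prints The Godfather, Star Wars, The Sting, Story of a Love Affair, The Untouchables, which is the placement described above. Because the pattern requires whitespace after "the", a title such as "Thelma & Louise" is filed under its first word as it should be.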

Computers are stupid, in other words, which means that the people who program them have to have smarts enough for both of them. Unfortunately for our entire species and civilization, the software industry has historically valued skill at writing efficient and reliable software over writing software that adequately reflects reality. There is an entire genre of lists that illustrate the problem with this, the "falsehoods programmers believe" lists:

https://github.com/kdeldycke/awesome-falsehood

From "names of people" to "street addresses"; from "prices" to "time"; from "email addresses" to "phone numbers"; the "awesome falsehoods" lists are awesome because they reveal how much subtlety and complexity is lurking in these seemingly simple and intuitive concepts. This subtlety and complexity might never emerge through the process of trying to teach a person about them, but when you try to teach a computer about them, you have to confront them in all their awesome fuggliness.

That's because humans have context, agency and flexibility. Sure, the person who designs a form with a blank for "name" might never have met a Malagasy person whose first name is Randriamananjararadofabesata, but in the pre-digital world, when Madagascar Slim met a public official who had to transcribe his name onto a paper form, that official could simply draw an arrow in the margin next to the "name" blank, turn the form over, and write out all 28 characters on the reverse:

https://en.wikipedia.org/wiki/Madagascar_Slim

Computers can't do this. If the programmer doesn't know about Malagasy first names, the computer doesn't know about them either, and the only person who can "teach" the computer about these names is a programmer with access to the code for the database, who has to manually alter the code, compile it, and distribute it to everyone who uses it.
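To make the failure concrete, here is a hypothetical Python sketch of the kind of validation a programmer might write without knowing about Malagasy names. The 20-character limit and the ASCII-letters-only rule are invented for illustration, not taken from any real system. The point is that the assumption lives in the code, so relaxing it means editing, rebuilding, and redeploying the software rather than persuading whoever is at the counter.

```python
# Hypothetical limit chosen by a programmer who has never met a long first name.
MAX_FIRST_NAME_LEN = 20


def naive_first_name_ok(name: str) -> bool:
    """Encodes two 'falsehoods': names are short, and names are ASCII letters."""
    return 0 < len(name) <= MAX_FIRST_NAME_LEN and name.isascii() and name.isalpha()


def tolerant_first_name_ok(name: str) -> bool:
    """Accept any non-blank name and store it exactly as given."""
    return bool(name.strip())


for name in ["Randriamananjararadofabesata", "José", "Anne-Marie"]:
    print(f"{name!r}: length {len(name)}, "
          f"naive={naive_first_name_ok(name)}, tolerant={tolerant_first_name_ok(name)}")
```

The naive check rejects all three names: the first is 28 characters, "José" is not ASCII, and the hyphen in "Anne-Marie" is not a letter. The tolerant check accepts them all.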

This is partly why digitization has been accompanied by a rise in people asserting that they exist on spectrums rather than in binaries. There were always people whose names, genders, races, and other biographic "immutables" changed, or failed to fit within the blanks on the forms. When those people's realities ran up against failures in the system's abstractions, they could petition a bureaucrat to turn the paper over and write an explanatory note, or to write really small to fill in a blank:

https://pluralistic.net/2023/02/02/nonbinary-families/#red-envelopes

Getting a human official to turn the paper over and write something that didn't fit in the blank is a personal challenge. It requires that a subject convince the person who controls the form to make an exception. This isn't always easy, but officials on the front lines necessarily deal with reality, and they can't get their jobs done unless they're capable of interpreting the necessarily incomplete procedures they operate under to fit things as they really are.

But a computer doesn't have any agency or context or flexibility. If the computer says your name isn't valid, you can't argue the computer into accepting it. The only way to get a digital world to acknowledge your existence is to campaign for systemic change. A trans person might (with great difficulty, to be sure) convince the regional registrar to white-out an old X on one "gender" box and mark a new X in the other box. But the only way to make that change in a software system that has been programmed to treat the "gender" field as immutable is to change society itself.

In this way, computers are machines for teaching us what we don't know about ourselves. They require that we interrogate and faithfully recreate our personal tacit knowledge, and they require that our societies interrogate their tacit presumptions as well. When you are forced to turn your tacit knowledge into explicit knowledge, you're also forced to confront how many broken assumptions lurk inside your reasoning. At best, it's a clarifying process.

Computers don't just clarify what we know and how we organize our society: they also clarify what we are. There are lots of things we once supposed a computer would never do, because we believed they required something that only humans possess.

Take chess: there are more possible chess games than there are hydrogen atoms in the universe, so brute-forcing chess by running all possible games is a technological impossibility. The best human chess players do something we don't quite understand, mixing their recollections of previous games with rules of thumb about the best strategies, with "creativity" (whatever that is) that lets them spontaneously develop new strategies. We can easily get a computer to memorize all the known-good chess sequences and all the rules of thumb, but we don't know what "creativity" is, so we can't encode it as a series of instructions.

But thanks to breakthroughs in machine learning, and especially in its subfield "deep learning," we have created chess-playing software that can beat every human, partly by assaying gambits that we would term "creative" if they originated with a human player.

What we make of this new fact is controversial. For many people (myself included), this calls for a refinement of what we mean by "creativity": it tells me that behaviors that are indistinguishable from "creativity" can, at least some of the time, be created by mechanical processes, and the mere fact that a machine does something that appears "creative" doesn't mean that machines are human.

For others, the fact that a mechanical system can evince a behavior that we would call "creative" in a human doesn't mean that we defined "creativity" too broadly; it means that we defined "human" too narrowly, and now we have made a machine that is, at least partially, a person.

I think this is the wrong conclusion to draw, for reasons that Ted Chiang sets out with luminous brilliance in a recent Atlantic article entitled "No, Artificial Intelligence Is Not Conscious":

https://www.theatlantic.com/philosophy/2026/06/no-artificial-intelligence-is-not-conscious/687378/

(If you're hitting the paywall on that one and you're on Firefox, you can try my favorite trick: switch to "Reader Mode" and hit "reload" – your mileage may vary.)

For all the reasons Chiang articulates, I think that drawing the "personhood" line to include machines is a technical mistake, but it's worse than that. Admitting machines to the "personhood" club is a tactical mistake, on par with the mistake we made when we admitted corporations to the personhood club. We should absolutely consider expanding personhood to incorporate living things, including animals and ecosystems, but at the same time, we must purge these dead, artificial constructs from the club:

https://pluralistic.net/2026/04/15/artificial-lifeforms/#moral-consideration

There is a way in which the recognition of new capabilities in machines parallels the recognition of new capabilities in animals other than ourselves. When those animals manage to do things that we once thought were the exclusive province of humans, we (should) take that as an opportunity to refine our conception of humanity. We're not "the animals that use tools" or "the animals that make plans" or "the animals that recognize themselves in mirrors," because there are other animals that do those things. We are an animal that uses tools, not the animal that does so.

Likewise, if we thought that some activity was unique to humans, or to living beings, and we manage to get a machine to replicate that activity, we should revise our view of the activity – not our view of the machine. Creative breakthroughs in chess are not "things that require a human mind"; they're "things that can be done by human minds and by machines."

Edsger Dijkstra famously wrote that the question of whether machines can think is "about as relevant as the question of whether Submarines Can Swim":

https://www.cs.utexas.edu/~EWD/transcriptions/EWD08xx/EWD898.html

Submarines and fish and humans and dolphins all propel themselves through water by different means. But when an animal swims, it does something that is different from what a submarine does. The submarine has no intention, while (complex multicellular) animals swim to pursue goals. Building machines that propel themselves through water is very useful, but it's not the same thing as creating life. In some ways, it's better than creating life: for one thing, we owe other living things moral consideration that is not due to machines. Harnessing a machine to accomplish our own goals is more morally clear than controlling living things to achieve those goals. By the same token, creating machines that can do some of the tasks that we ask of other humans can be the superior moral course. I'd rather have a machine remove mines from a minefield than have humans do it.

But beyond this moral relief, creating machines is a fantastic way to learn more about ourselves – making explicit our tacit knowledge, our implicit social assumptions, and the limitations of our conception of what sets us apart from the rest of the universe.

One way in which AI is exceptional is that it undermines this principle. Conventional software techniques struggled to produce a program that could identify objects in photographs. It turns out that defining all the visual correlates of "cat" is even harder than defining the letter "A." Deep learning techniques solved this previously insoluble problem by relieving us of the job of making explicit all the implicit factors that we deploy when distinguishing an image of a "cat" from an image of a "dog" or a "tiger" (or a "tractor").

Instead of forcing humans to engage in introspection until we'd made a list of every factor we use to identify cat pictures, we simply identified pictures of cats and fed them to a program that tried to find the commonalities among them. The more pictures we fed to that program, the better it got at identifying cats. Today, we have programs that can reliably distinguish an image of a cat from an image of a tiger cub!
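To make that contrast concrete, here's a toy sketch in Python. It is not deep learning (a deep network learns its own features from raw pixels, from huge numbers of examples); it swaps in a far simpler technique, a nearest-centroid classifier, over made-up two-number "features" that stand in for images. The function names, the features and the data are all hypothetical. What it shows is the shape of the idea: nobody writes down a rule for "cat." The program averages the labeled examples it's given and compares new inputs against those averages.

```python
# Toy illustration of "learning from labeled examples" instead of hand-written rules.
# This is a nearest-centroid classifier, NOT deep learning; the features are hypothetical.
from statistics import fmean


def train(examples):
    """Average the feature vectors for each label into a single centroid."""
    grouped = {}
    for features, label in examples:
        grouped.setdefault(label, []).append(features)
    return {
        label: [fmean(column) for column in zip(*vectors)]
        for label, vectors in grouped.items()
    }


def classify(centroids, features):
    """Return the label whose centroid is closest (squared Euclidean distance)."""
    def distance(centroid):
        return sum((a - b) ** 2 for a, b in zip(features, centroid))
    return min(centroids, key=lambda label: distance(centroids[label]))


# Hypothetical two-number "features" (say, ear pointiness and body size), labeled by hand.
examples = [
    ([0.9, 0.2], "cat"),
    ([0.8, 0.3], "cat"),
    ([0.3, 0.8], "dog"),
    ([0.2, 0.9], "dog"),
]
centroids = train(examples)
print(classify(centroids, [0.85, 0.25]))  # cat
```

Adding more labeled examples shifts the averages, which is a crude version of "the more pictures we fed to that program, the better it got." At no point does anyone have to articulate what makes a cat a cat.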

This represents a major breakthrough in the power of computers to perform useful work for us, but it's also a huge regression in computers' role in forcing us to make our tacit thought processes explicit through systematic introspection. That's probably fine: we didn't create computers to make us introspect, we created them to do useful work for us. All things considered, it might be better to have genies who grant our wishes according to the spirit of our words, not their letter.

AI may not force us to render our implicit thoughts as explicit instructions, but it absolutely forces us to reconsider and narrow the realm of the numinous. Our own creativity is still delightful and important, but the fact that the outcomes of this squishy, amazing process can (sometimes) be replicated by procedural machines changes how we define what is special about living things. We're "a thing that can produce creative outcomes" but not "the thing that can produce creative outcomes." The machines aren't being creative (any more than a submarine is swimming) but they're outputting things that we used to only achieve by means of creativity.

An AI that does something that used to require creativity is fulfilling my favorite of Brian Eno and Peter Schmidt's Oblique Strategies: "Be the first person to not do something that no one else has not done before":

https://stoney.sb.org/eno/oblique.html

Just as bosses fantasize about AI bringing about a worksite without workers, and Zuckerberg is trying to build social media without socializing, and politicians want a bureaucracy without bureaucrats, we can sometimes use AI to produce creative outcomes without creativity:

https://pluralistic.net/2026/05/27/unnecessariat/#rubbuts-stole-my-jerb

That isn't to say that AI art is any good. AI may produce things that are aesthetically interesting, but it can't produce things that mean anything:

https://pluralistic.net/2026/06/02/must-we-pretend/

But art isn't the only realm that we apply creativity to. There are plenty of outcomes that we've always believed we couldn't bring about without applying creativity. AI – like all software – is making us realize that an ingredient we once deemed uniquely essential turns out to have substitutes. AI can sometimes accomplish things without us explaining how we do them. That relieves us of a useful but difficult chore – but in so doing, it forces us (yet again!) to revisit what sorts of things are needed to do the things that matter to us, and therefore, what makes us special.

Hey look at this

- EU plots long game against US digital supremacy https://www.politico.eu/article/eu-plots-long-game-against-us-digital-supremacy/

- Open World Map: Digital Sovereignty for Game Creators https://luma.com/8nvmyatm

- Enshittifier — replace AI with https://enshittifier.wells.ee/

- AI is the greatest money-wasting scheme humanity has ever invented https://www.telegraph.co.uk/news/2026/06/04/ai-is-the-greatest-money-wasting-scheme-humanity-has-ever-i/?WT.mc_id=tmgoff_tw_post_scheme-humanity-has-ever-i/

- Enshittification, Despotification, and the Open Internet https://www.liberalism.org/p/enshittification-despotification-and-the-open-internet

Object permanence

#20yrsago GNU Radio: the universal, software-defined radio https://web.archive.org/web/20060613062355/https://www.wired.com/news/technology/1,70933-0.html

#15yrsago France bans “follow us on Twitter” from newscasts https://web.archive.org/web/20110606035424/http://www.zdnet.com/blog/facebook/france-bans-facebook-and-twitter-from-radio-and-tv/1559

#5yrsago Aaron Swartz, vindicated https://pluralistic.net/2021/06/04/aaronsw/#cfaa

#5yrsago Capitalism's crooked refs https://pluralistic.net/2021/06/04/aaronsw/#crooked-ref

Upcoming appearances

- Kansas City: Facing the Future (Woodneath Library Center), Jun 10 https://www.mymcpl.org/events/119655/facing-future-cory-doctorow

- LA: The Reverse Centaur's Guide to Life After AI with Brian Merchant (Skylight Books), Jun 19 https://www.skylightbooks.com/event/skylight-cory-doctorow-presents-reverse-centaurs-guide-life-after-ai-w-brian-merchant

- Menlo Park: The Reverse Centaur's Guide to Life After AI with Angie Coiro (Kepler's), Jun 21 https://www.keplers.org/upcoming-events-internal/cory-doctorow-2026

- Toronto: TBA, Jun 23

- NYC: The Reverse Centaur's Guide to Life After AI with Jonathan Coulton (The Strand), Jun 24 https://www.strandbooks.com/cory-doctorow-the-reverse-centaur-s-guide-to-life-after-ai.html

- Philadelphia: The Reverse Centaur's Guide to Life After AI with David Williams (Fitler Club/Philadelphia Citizen), Jun 25 https://www.eventbrite.com/e/cory-doctorow-book-event-tickets-1990110326559

- Chicago: The Reverse Centaur's Guide to Life After AI with Rick Perlstein (Exile in Bookville), Jun 26 https://exileinbookville.com/events/50628

- Edinburgh International Book Festival with Jimmy Wales, Aug 17 https://www.edbookfest.co.uk/events/the-front-list-cory-doctorow-and-jimmy-wales

- South Bend: An Evening With Cory Doctorow (Notre Dame), Oct 6 https://franco.nd.edu/events/2026/10/06/an-evening-with-cory-doctorow/

Recent appearances

- Cory Doctorow's digital jail-break (DW In Focus) https://www.dw.com/en/cory-doctorows-digital-jail-break/audio-77414035

- Why the Internet Got Worse and What to Do About It (Jim Rutt) (RIP) https://www.jimruttshow.com/cory-doctorow-3/

- On Enshittification – and what can be done about it (Re:publica) https://www.youtube.com/watch?v=KhINQgPMVSI

- EFFecting Change: How to Disenshittify the Internet (EFF, with Wendy Liu) https://archive.org/details/effecting-change-enshittification

- The “Enshittification” of Everything (Bioneers) https://bioneers.org/cory-doctorow-enshittification-of-everything-zstf2605/

Latest books

- "Canny Valley": A limited edition collection of the collages I create for Pluralistic, self-published, September 2025 https://pluralistic.net/2025/09/04/illustrious/#chairman-bruce

-